[Blog] Using the Time-Triggered approach for a better design of safety critical Real-Time Systems

Oct 2017, posted in Blog

ASTERIOS is way more than an innovative Real-Time Kernel (RTK). It does come with an efficient micro-kernel and some services, which are carefully implemented and optimized to offer the best performance and safety guarantees. But it turns out the RTK constitutes only a small part of the code base that we maintain at KRONO-SAFE.

Beyond the original Integrated Development Environment and the simulation and debugging tools, ASTERIOS offers a rather unique multi-task, parallel Real-Time Programming Model that we call Psy (as in Parallel SYnchronous: no link with Korean pop music whatsoever). This model enforces several safety-oriented properties; and in order to actually write applications that comply with this model, we’ve come up with a dedicated programming language, built upon the legacy C language, and that we’ve called Psy-C.

This post is about the properties of our Programming Model, and the benefits we see in designing real-time applications in Psy.

Time-Triggered vs Event-Triggered

Embedded Real-Time Systems are usually designed based on either one of two pillar-concepts: the Event-Based, or the Time-Triggered design approach:

- an event-based system reacts to the occurrence of asynchronous events in the system, such as an external interrupt (e.g. when a network device receives a packet), or the release of a shared resource (e.g. release of a mutex/semaphore/lock of any kind);

- conversely, in a time-triggered system the observable state of the system can only change at specific, physical dates, which are known prior to execution.

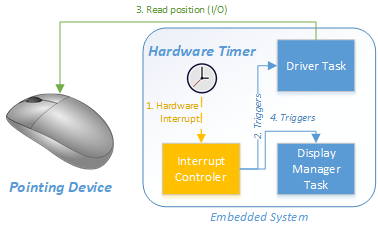

We can illustrate both approaches with a simple example of a pointing device driver task (e.g. a mouse driver). In an event-based system, when the pointing device detects a change of position, an interrupt request is sent to the processor, triggering the execution of the driver task; the task will then most likely use a lock-based inter-process communication layer to notify a display server of the new position of the pointer:

In a time-triggered system, the interrupt channel of the device would be disabled, and a single real-time timer would provide a periodic « tick », giving the heartbeat to the whole system. The driver task would be activated by this tick, would poll the device for a change of position (for instance every 10ms), and issue a message to the display server when necessary:
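The polling scheme described above can be sketched in plain C. This is only an illustration, not ASTERIOS or Psy-C code: `position_t`, `driver_state_t` and `driver_on_tick()` are hypothetical names, and the `current` argument stands for the value the driver would read from the device at each tick.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical types, introduced for illustration only. */
typedef struct { int32_t x, y; } position_t;

typedef struct {
    position_t last_sent;  /* last position reported to the display server */
    unsigned   messages;   /* number of messages issued so far */
} driver_state_t;

/* Called exactly once per timer tick (e.g. every 10 ms).
 * `current` is the position polled from the device during this tick.
 * Returns true if a message was issued to the display server. */
bool driver_on_tick(driver_state_t *st, position_t current)
{
    assert(st != NULL);
    if (current.x == st->last_sent.x && current.y == st->last_sent.y) {
        return false;      /* pointer has not moved: nothing to send */
    }
    st->last_sent = current;
    st->messages++;        /* stands for "send a message to the display server" */
    return true;
}
```

The work done per tick is the same whether or not the device moved, apart from the message itself, which is what makes the CPU cost of the driver predictable.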

At first sight, one could object that the time-triggered solution seems to be less efficient: first, the task is executed periodically even if the device has not moved, inducing a CPU overhead. Second, the polling induces a latency of up to 10ms before an input is taken into account. But these points are actually assets when designing a critical real-time system:

- Because the driver task is only executed periodically, the system designer knows in advance the exact CPU load required by the driver task. Notably, it means that the system cannot be overloaded by an interrupt storm, which is one of the oldest concerns when it comes to safety of real-time systems.

- The induced latency actually also gives an upper-bound of the longest time that can elapse before an input is taken into account, since the driver task will run exactly once every 10 ms. In addition, the fact that the observable state of the system (namely the interpreted position of the pointer) can change only every 10 ms eases reproducibility, thus making the system easier to test.

The Time- vs Event-Triggered war has been going on for decades in the world of embedded real-time systems; the truth being of course that both models have their advantages and their drawbacks. The event-based approach is considered to be better suited for non-critical, low-latency real-time systems, whereas time-triggered systems offer better reproducibility and determinism. Thus, at KRONO-SAFE, we’ve tried to take the best of both worlds: the roots of the Psy programming model are Time-Triggered, and we’ve built some extensions that allow, to some extent, external events to be handled with low latency.

In this post, we’ll focus only on the time-triggered approach used in ASTERIOS, and the key benefits it provides.

The Psy Programming Model: Expressing Temporal Behavior

The Psy programming model is parallel and multi-task, meaning that an application can be made of several execution threads that are executed simultaneously. A sequential execution unit is called an Agent, which can be seen as a real-time variant of a UNIX process.

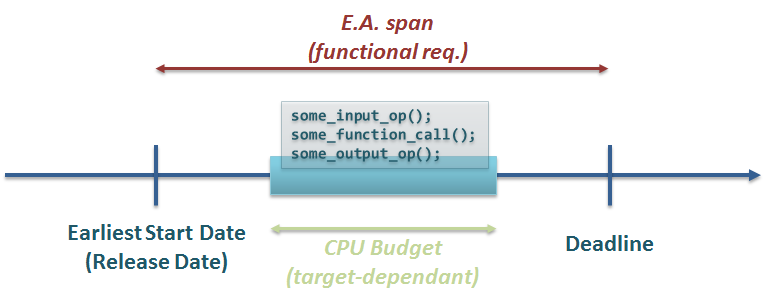

An Agent is an infinite succession of time-constrained Elementary Actions. The Elementary Action or E.A is the building block of a Psy application, and is defined by:

- a list of instructions to execute sequentially (e.g. a call to a C function, an assembly routine,…);

- an Earliest Start Date (ESD) and a Deadline (both absolute, physical dates);

- a CPU budget, basically the actual CPU time granted to complete the execution of the aforementioned set of instructions.
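To make the three ingredients concrete, here is a minimal C sketch of an Elementary Action as a data structure. It is not the Psy-C syntax nor any ASTERIOS API: `elementary_action_t`, `ea_is_well_formed()` and `ea_make()` are hypothetical names, and dates are assumed to be expressed in microseconds.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

typedef uint64_t time_us_t;   /* absolute physical date, in microseconds (assumption) */

/* Hypothetical representation of one Elementary Action. */
typedef struct {
    void      (*body)(void);  /* instructions to execute sequentially */
    time_us_t esd_us;         /* Earliest Start Date */
    time_us_t deadline_us;    /* Deadline */
    time_us_t budget_us;      /* CPU budget granted to run body */
} elementary_action_t;

/* An E.A only makes sense if its window is non-empty and the
 * CPU budget fits inside that window. */
bool ea_is_well_formed(const elementary_action_t *ea)
{
    return ea->esd_us < ea->deadline_us
        && ea->budget_us <= ea->deadline_us - ea->esd_us;
}

/* Builds an E.A and rejects inconsistent timing at construction. */
elementary_action_t ea_make(void (*body)(void), time_us_t esd_us,
                            time_us_t deadline_us, time_us_t budget_us)
{
    elementary_action_t ea = { body, esd_us, deadline_us, budget_us };
    assert(ea_is_well_formed(&ea));
    return ea;
}
```

Checking that the budget fits in the window is only a necessary condition; whether all E.As of all Agents can be scheduled together is a separate question.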

The Psy programming model allows for any scheduling policy, preemptive or not, as long as this policy ensures that the actual execution of each E.A remains bounded after the Earliest Start Date and before the Deadline; thus, the actual execution can be performed in several steps. For instance, the figure below shows the same E.A, with the same CPU budget, but with a different scheduling: the execution is preempted twice during the course of the E.A:

Allow us to emphasize the fact that, with respect to the time-triggered principle, there can be no additional constraint on the execution of an E.A: it can be executed if and only if ESD <= t < Deadline, where t is the current physical time. There can be no additional synchronization: the task cannot be blocked waiting for a lock, or waiting for an external interrupt other than the timer tick that marks the Earliest Start Date.
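The two rules above (eligibility depends on time only, and execution may be split into several slices within the budget) can be sketched as follows. The function names are hypothetical and this is not how the ASTERIOS kernel is implemented; it only illustrates the conditions stated in the text.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* True iff an E.A with window [esd, deadline) may execute at time `now`.
 * Time is the only input: no lock, no external event. */
bool ea_may_run(uint64_t esd_us, uint64_t deadline_us, uint64_t now_us)
{
    assert(esd_us < deadline_us);
    return esd_us <= now_us && now_us < deadline_us;
}

/* Charges one execution slice against the remaining CPU budget.
 * Returns false on a budget overrun, which a real system would
 * report to its health monitoring. */
bool ea_charge_slice(uint64_t *remaining_us, uint64_t slice_us)
{
    assert(remaining_us != 0);
    if (slice_us > *remaining_us) {
        *remaining_us = 0;
        return false;
    }
    *remaining_us -= slice_us;
    return true;
}
```

For instance, an E.A with a 500 microsecond budget can be preempted after 200 microseconds and resume later for the remaining 300, as long as both slices fall inside the window.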


Going further: Semantics of an Elementary Action

We have just seen that, when defining an Elementary Action, the developer has to specify two time durations: the E.A. span, and the CPU budget provided to execute the code of this E.A. This may come as a surprise at first: in fixed priority systems for instance, the developer usually tends to worry only about the execution time (that is, the CPU budget) of each job. But we believe that this is precisely the source of many misconceptions and poor design choices in hard real-time systems.

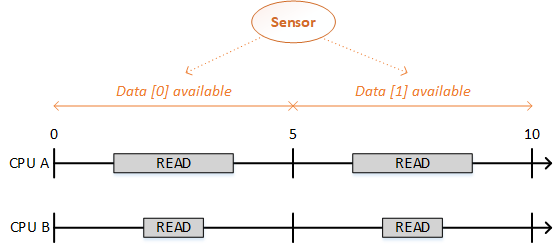

There is a fundamental semantic difference between the span of an E.A and the CPU budget. The span of an Elementary Action is set by a functional requirement, whereas the CPU budget is an entirely target-dependent value, that should be greater than or equal to the worst-case execution time (WCET) of the set of instructions to be executed.

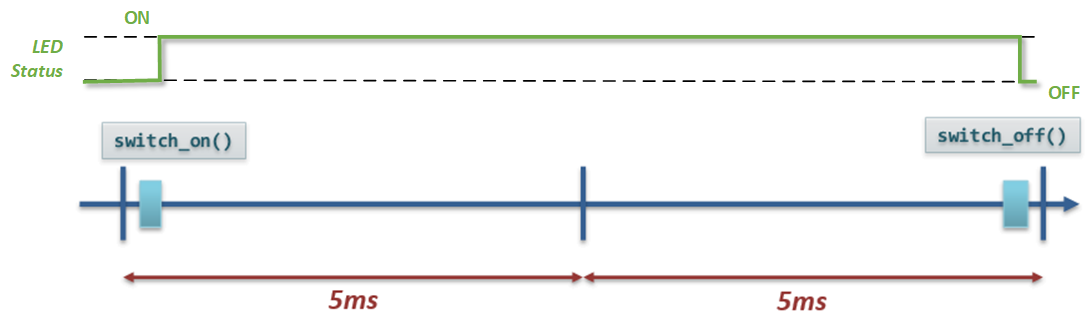

For example, consider a driver that fetches some data from a sensor, from which a new read is available every 5 ms. That’s a functional requirement: the driver should fetch a new value every 5 ms, no matter how fast the CPU can actually read the register from the peripheral. Said otherwise: suppose you’ve written your driver for some CPU model A, for which you’ve set the CPU budget to 2.5 ms; and say you have to port your application to a new CPU model B, twice as fast: then you will probably adjust the CPU budget to 1.25 ms; the span of the E.A however shall remain 5 ms:
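The porting example can be written down as a small C sketch, where the span is treated as fixed by the requirement and only the budget changes with the target. The names `ea_timing_t`, `ea_retarget()` and `ea_cpu_load_permille()` are hypothetical, and the new budget is assumed to come from a fresh evaluation on CPU B rather than from a simple division.

```c
#include <assert.h>
#include <stdint.h>

/* Timing of one periodic E.A, in microseconds (hypothetical type). */
typedef struct {
    uint32_t span_us;    /* set by the functional requirement */
    uint32_t budget_us;  /* target-dependent, should be >= WCET on that target */
} ea_timing_t;

/* Porting to another CPU changes the budget only; the span is untouched. */
ea_timing_t ea_retarget(ea_timing_t t, uint32_t new_budget_us)
{
    assert(new_budget_us <= t.span_us);
    t.budget_us = new_budget_us;
    return t;
}

/* Worst-case CPU load of the E.A, in per-mille of one core. */
uint32_t ea_cpu_load_permille(ea_timing_t t)
{
    assert(t.span_us > 0u);
    return (uint32_t)(((uint64_t)t.budget_us * 1000u) / t.span_us);
}
```

With the figures from the text, the driver reserves 500 per-mille (50%) of CPU A and 250 per-mille (25%) of CPU B, while still delivering one sensor value every 5 ms on both.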

One last note regarding the evaluation of WCETs: several industrial-grade tools are entirely dedicated to the evaluation of WCETs, and ASTERIOS Developer does not aim to become one of them. We do however provide profiling tools to reasonably estimate the CPU budget of each Elementary Action, based on multiple executions on target – but that’s for another post.

Illustration with the Mother of all Examples

Let us proceed to the unsurpassable horizon of any embedded system development, using the ASTERIOS programming model: let’s make a LED blink!

Necessary disclaimer: this example is not as ridiculously naive as it seems – besides you can easily transpose it to a number of textbook Instrumentation & Control functions (PWM regulation for instance).

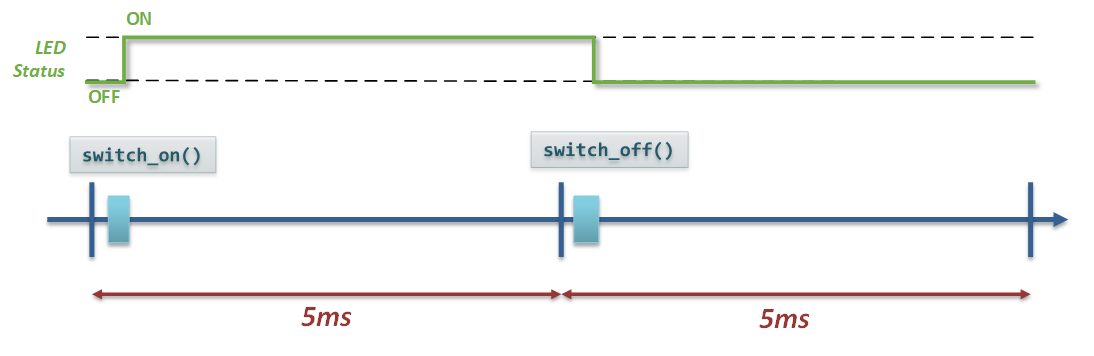

So, suppose our Software Requirements Specification (SRS) states that « The LED shall blink periodically, with a period of 10 ms and a duty cycle of .5 ». In layman’s terms, the LED shall be half-time on, half-time off, every 10 ms.

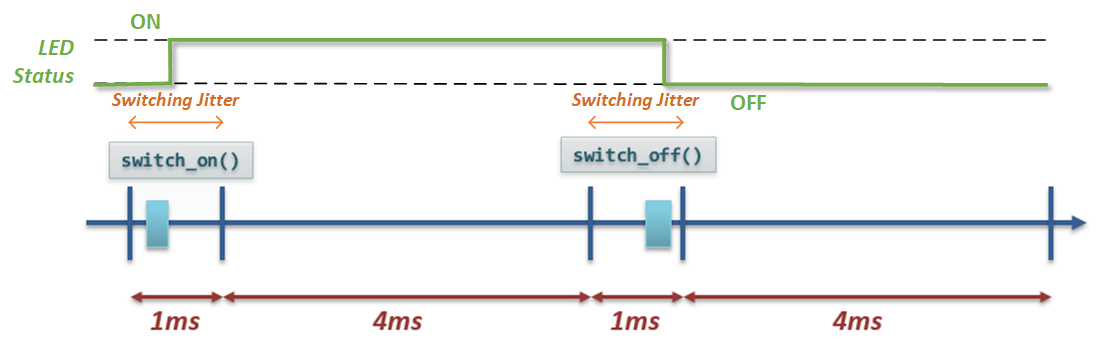

It would seem reasonable to translate this in Psy with two Elementary Actions of span 5 ms, executed indefinitely: the first one would switch the LED on, and the second one would switch it off. Notice already that the CPU budgets were not specified by the SRS: a requirement should have stated for instance that the CPU load to run this task shall not be greater than 1 ms every 10 ms. Thus, let us grant 500 microseconds at most for executing each function switch_on() and switch_off(). A representation of one cycle on a timeline gives:

We can already see the catch: the Psy programming model does not give any guarantee on when the code is actually executed within the Elementary Action. Therefore, we have written a task that could very well be scheduled like this:

In that case, the LED will remain on almost all the time; other scheduling policies could also lead the LED to be off all the time. Needless to say, the requirement is not satisfied.

If we try to fix the implementation, we need to write a cycle of 4 successive Elementary Actions:

And that would actually be the right approach. Notice how the switch_on() and switch_off() calls have been encompassed in smaller Elementary Actions. The SRS should have specified the span of these time windows.

To be more precise, the specification fails to define the acceptable jitter for switching the LED on and off: there is no such thing as « instantaneous » in any physical system, and the specification should definitely take this into account.

To sum it up, a correct specification should look like this:

- The system shall be 10 ms-periodic;

- The overall duty cycle shall be between .4 and .6. Over a cycle of 10 ms:

  - the LED shall be switched on between 0 and 1 ms;

  - the LED shall be switched off between 5 and 6 ms.

- The total CPU time needed to switch the LED on resp. off shall be monitored and not exceed 500 microseconds.

Some additional requirements should be provided to specify the behavior in case one of these constraints cannot be met during execution (health monitoring).
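The link between the switching windows and the duty cycle bounds can be checked with a few lines of C. This is a sketch under the assumption that the LED changes state at some unknown instant inside each window; `window_t`, `bounds_t` and `led_on_time_bounds()` are hypothetical names, and offsets are given in microseconds from the start of the 10 ms cycle.

```c
#include <assert.h>
#include <stdint.h>

/* Time window [esd, deadline) of an E.A, as offsets within one cycle. */
typedef struct { uint32_t esd_us; uint32_t deadline_us; } window_t;

/* Limits of the time the LED stays on during one cycle. */
typedef struct { uint32_t min_us; uint32_t max_us; } bounds_t;

/* The LED is switched on somewhere in `on` and off somewhere in `off`.
 * Shortest on-time: on as late as possible, off as early as possible.
 * Longest on-time: on as early as possible, off as late as possible.
 * Deadlines are exclusive, so max_us is approached but never reached. */
bounds_t led_on_time_bounds(window_t on, window_t off)
{
    assert(on.esd_us < on.deadline_us);
    assert(off.esd_us < off.deadline_us);
    assert(on.deadline_us <= off.esd_us);
    bounds_t b = { off.esd_us - on.deadline_us, off.deadline_us - on.esd_us };
    return b;
}
```

With the naive two-E.A design (windows 0 to 5 ms and 5 to 10 ms) the on-time can be anywhere between 0 and 10 ms, which is the failure described earlier. With the refined windows (0 to 1 ms and 5 to 6 ms) it lies between 4 and 6 ms, i.e. a duty cycle between .4 and .6.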


A common mistake when trying to refine this specification is to state that the LED should be switched on and off « as soon as possible » after t=0 and t=5ms.

First of all, this requirement is only partial: it fails to specify a deadline, i.e. a date after which, if the LED has not been switched, an error should be raised.

However, on a system where this task is the only one to be executed, « as soon as possible » may seem a reasonable requirement. Yet, this « best effort » approach is a bad idea for scalability: when adding a dozen new tasks to the system, « as soon as possible » implies priorities between tasks. Although this approach is formally correct, it turns the issue into a static scheduling problem for the programmer.

ASTERIOS Developer lets you avoid precisely this static-priority nightmare by providing an automatic offline scheduler for PsyC applications – but that’s for another post.

Conclusion

We hope to have shown with this apparently naive example, that specifying a « hard » real-time behavior actually requires more than it seems; and we believe that the Psy programming model is the right way to do it. The Psy programming model forces the designer to fully specify the temporal behavior of the tasks (Earliest Start Dates, Deadlines, CPU budgets), which can then be implemented in PsyC in ASTERIOS Developer.

In this post we have only scratched the surface of the possibilities and the expressiveness offered by the Psy Programming model and the time-triggered approach. Among other things, it enforces deterministic communications (as we’ve shown in a previous post), it enables reproducible simulation on workstations, and it ultimately greatly facilitates porting an application from one hardware target to another – even multi-core. In the upcoming posts, we’ll illustrate the expressiveness of the PsyC language, and the complex temporal behavior that you can describe with ASTERIOS while still preserving determinism and reproducibility.

About the author

Emmanuel Ohayon has been a Software Architect at KRONO-SAFE since 2014; he has contributed to the roots of ASTERIOS technology (compiler and generic part of the Real-Time Kernel) as head of the Core Team. He currently leads some dark, secret R&D projects that aim to make ASTERIOS rule the World of RTOSes, with null latency. Loves to speak of himself in the third person. Before KRONO-SAFE, he was a Research Engineer at CEA (French Alternative Energies and Atomic Energy Commission).