[Blog] Deterministic Real-Time Communication Paradigm

- May

- 2017

- Posted in Blog

Determinism is something of an El Dorado when it comes to real-time, safety-critical systems. However, the definition of a deterministic system is, paradoxically, quite debatable: the most common formulation is a system which, given the same set of inputs and initial state, will always produce the same outputs. The problem with that definition is that, depending on the extent of what one considers to be the inputs and outputs of the system, determinism can be either trivially easy or next to impossible to achieve.

We bring a pragmatic answer to this issue: ASTERIOS® makes it possible to build multi-task real-time systems that are deterministic by construction, in the sense that the observable inputs and outputs of each task will always be the same. These observable inputs/outputs are the data exchanged through an inter-task communication layer specifically designed to enforce this property. In this post, we present the paradigm that governs all communication in an ASTERIOS® application, and show how it improves reproducibility and testability, both at the task level and system-wide, regardless of the target architecture (be it single-core or multi-core).

Turning a Simple Example into a Nightmare

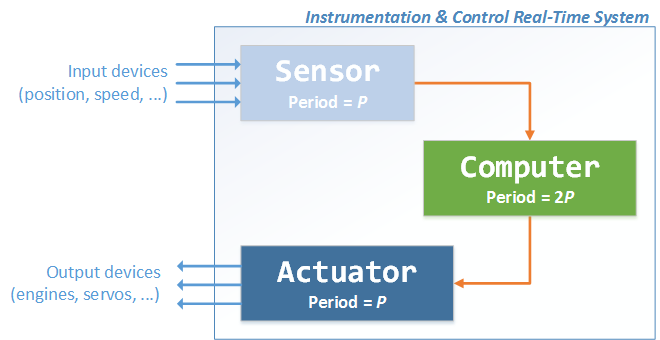

Consider a basic real-time Instrumentation & Control (I&C) loop, such as a PID controller. We’ll split the software into three independent, periodic tasks, with the following temporal constraints:

- a P-periodic task called Sensor, in charge of reading various sensor devices and pre-processing their values (e.g. applying a Kalman filter to the outputs of an embedded Inertial Measurement Unit);

- a 2P-periodic task called Computer, in charge of the actual correction computation;

- a P-periodic task called Actuator, in charge of commanding servo-motors (you know…actuation stuff).

For the sake of the example, let’s assume that we are using a real-time, preemptive operating system that provides a 1-to-1 inter-task communication mechanism such that:

- data coherency is enforced;

- every read() operation returns the most recent data available (e.g. a non-blocking LIFO).
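The communication mechanism assumed above can be sketched as a single-slot buffer. This is a minimal Python illustration under our own assumptions (the class `LatestValueChannel` and its methods are hypothetical, not the API of any particular RTOS): a lock stands in for whatever coherency mechanism the OS provides, each write overwrites the previous value, and reads never wait for fresh data.

```python
import threading
from typing import Generic, Optional, TypeVar

T = TypeVar("T")


class LatestValueChannel(Generic[T]):
    """Hypothetical 1-to-1 channel: read() returns the most recent write."""

    def __init__(self) -> None:
        self._lock = threading.Lock()  # data coherency: no torn reads or writes
        self._value: Optional[T] = None  # None until the first write

    def write(self, value: T) -> None:
        with self._lock:
            self._value = value  # overwrite: older values are lost

    def read(self) -> Optional[T]:
        with self._lock:
            return self._value  # never waits for new data, returns what is there
```

With this semantics, a reader simply gets whatever was written last, which is exactly why the values it sees depend on when it happens to run.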

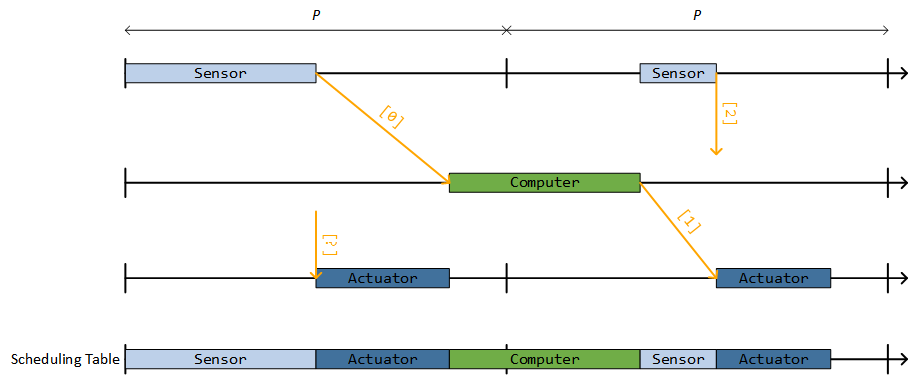

We can already see that, with no further specification of the expected behavior, it is difficult to tell which data will be consumed by each task at every cycle. To know this precisely, we need the exact scheduling of the application. The timelines above show an admissible scheduling plan for our application over two consecutive cycles of length P. The execution of a task instance is represented by a horizontal colored box; data read (resp. published) by each instance are represented by incoming (resp. outgoing) orange arrows. The data exchanged is represented by an integer value between brackets.

With this scheduling, during the first cycle Sensor sends [0] to Computer, which in turn sends [1] to Actuator. During the second cycle, Sensor produces [2], but there is no instance of Computer to make use of it (yet), so Actuator reads the latest value published by Computer, which is still [1].

As the Scheduling Table timeline clearly shows, this plan assumes that during the first cycle of length P, one instance each of Sensor (S), then Computer (C), then Actuator (A) can be executed, thus allowing the fastest overall response time. Unfortunately, the behavior of this system heavily depends on the execution time of each task. Imagine for instance that tasks occasionally take more time to execute: all three task instances may not fit in the first cycle, forcing the execution of Computer to be moved after Actuator.

You can see that in this case, the instance of Actuator during the first cycle does not read the same input value as in the previous case (since Computer has not run yet to feed it with data). In other words, the response time of the whole system is delayed, since Actuator will issue a valid control output only during the second cycle.
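The two scenarios can be replayed in a few lines of Python. In this hypothetical sketch, the two latest-value channels are modeled by plain variables that are overwritten on each write, and published values are numbered with a global counter, as in the figures. Only the execution order inside the first cycle differs between the two runs, yet Actuator does not read the same inputs.

```python
from typing import Dict, List, Optional


def run(schedule: List[List[str]]) -> Dict[str, List[Optional[int]]]:
    """Execute the instances of each cycle in the given order ("S", "C", "A").

    Returns the values read by Computer ("C") and Actuator ("A"), in order.
    """
    s_to_c: Optional[int] = None  # latest value on the Sensor -> Computer channel
    c_to_a: Optional[int] = None  # latest value on the Computer -> Actuator channel
    next_value = 0  # global counter used to label published data, as in the figures
    reads: Dict[str, List[Optional[int]]] = {"C": [], "A": []}
    for cycle in schedule:
        for task in cycle:
            if task == "S":
                s_to_c = next_value  # overwrite: latest-value semantics
                next_value += 1
            elif task == "C":
                reads["C"].append(s_to_c)
                c_to_a = next_value
                next_value += 1
            elif task == "A":
                reads["A"].append(c_to_a)
    return reads


# Cycle 1 then cycle 2 (Computer is 2P-periodic, so it runs only once).
nominal = run([["S", "C", "A"], ["S", "A"]])
overrun = run([["S", "A", "C"], ["S", "A"]])  # Computer pushed after Actuator
```

Under these assumptions, `nominal["A"]` is `[1, 1]` while `overrun["A"]` is `[None, 1]`: with latest-value semantics, what a task reads depends on the order in which instances happen to run.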

It would be wrong to believe that the issue is “only” a matter of evaluating the worst-case execution times of our tasks. In the example we have given, using a static scheduling plan does indeed make the communication deterministic. But consider what happens when you need to extend the system and schedule another task, say a 2P-periodic one; or if you need to go multi-core, and execute Computer on one core while simultaneously running Sensor and Actuator on another… The whole scheduling plan needs to be reworked, and the data exchanged between tasks may change completely. All these issues are a well-known source of headaches for any system integrator: adding a new task, or even slightly changing the CPU budget granted to a task in a static scheduling plan, often has cascading consequences on the whole system, its test suites, and its certification credentials.

This simplistic example shows how communication determinism can be very hard to achieve, and even harder to reconcile with system modularity and global reusability.

A Deterministic Communication Paradigm

ASTERIOS® offers a communication paradigm between tasks which ensures that the messages exchanged between them will always remain the same, in all the practical cases presented above. It relies on a very simple, yet powerful principle that we call the Visibility Principle:

- any data produced by a task instance can be made visible to the rest of the system only after the deadline (i.e. latest ending date) of that instance;

- conversely, a task instance may only read data that was already visible at the release date (i.e. earliest starting date) of that instance.
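The two rules can be sketched as a channel in which every write is tagged with the deadline of the producing instance, and every read with the release date of the consuming instance. This is a minimal Python illustration under those assumptions, not the ASTERIOS® API (`VisibilityChannel`, `write` and `read` are hypothetical names); it keeps the whole history for readability, whereas a practical implementation would presumably bound it.

```python
import bisect
from typing import Generic, List, Optional, TypeVar

T = TypeVar("T")


class VisibilityChannel(Generic[T]):
    """Channel obeying the Visibility Principle (illustrative sketch only)."""

    def __init__(self) -> None:
        self._dates: List[float] = []  # visibility dates, kept sorted
        self._values: List[T] = []     # value published at each visibility date

    def write(self, value: T, deadline: float) -> None:
        """Publish `value` from an instance whose deadline is `deadline`.

        The value becomes visible to the rest of the system only at that deadline.
        """
        i = bisect.bisect_right(self._dates, deadline)
        self._dates.insert(i, deadline)
        self._values.insert(i, value)

    def read(self, release: float) -> Optional[T]:
        """Return the newest value already visible at `release`, or None."""
        i = bisect.bisect_right(self._dates, release)
        return self._values[i - 1] if i > 0 else None
```

For instance, a value written with deadline 1 is invisible to an instance released at 0.5, but visible to one released at 1. The result of `read` depends only on the dates involved, not on when `write` was actually called, provided that each writer finishes before its deadline.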

Illustration with a Minimalist Example

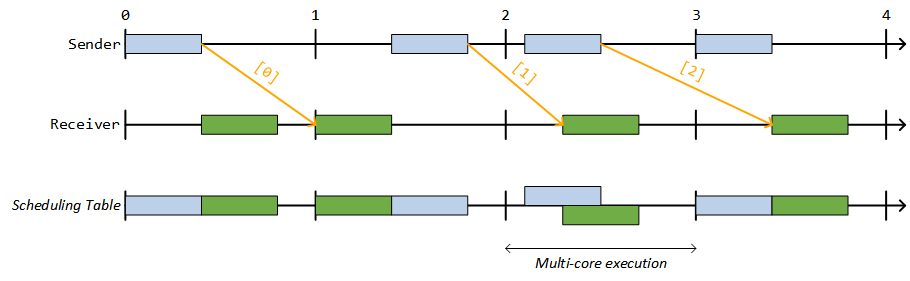

The figure above shows the scheduling of two communicating tasks, Sender and Receiver, both 1-periodic.

The data produced by Sender during [0, 1] is visible to Receiver only during [1, 2], and so on. Notice that we’ve purposely depicted a chaotic scheduling: during the first cycle, Sender runs before Receiver; during the second cycle, Receiver runs before Sender; and during the third cycle, both tasks run simultaneously (illustrating e.g. a multi-core execution). The Visibility Principle applies in every case, and ensures complete reproducibility: for two separate runs of the application, the same instances of Sender and Receiver will always read/publish the same values during the same cycles, regardless of the scheduling variations that may occur within these cycles.
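The Sender/Receiver example can be checked with a small simulation of the same rule, assuming a period of 1 and deadlines at the end of each cycle. The execution order inside each cycle is shuffled with a seeded random generator (a hypothetical stand-in for scheduling variations); whatever the seed, Receiver reads the same sequence of values. Simultaneous execution on two cores is not modeled directly, but the argument is the same: the value a Receiver instance may read was published in an earlier cycle, so intra-cycle interleaving cannot change it.

```python
import random
from typing import Dict, List, Optional


def simulate(cycles: int, seed: int) -> List[Optional[int]]:
    """Sender and Receiver are both 1-periodic; cycle k spans [k, k + 1]."""
    rng = random.Random(seed)
    published: Dict[int, int] = {}  # visibility date -> value published by Sender
    received: List[Optional[int]] = []
    for k in range(cycles):
        release, deadline = k, k + 1
        jobs = ["sender", "receiver"]
        rng.shuffle(jobs)  # Sender first or Receiver first, chosen at random
        for job in jobs:
            if job == "sender":
                published[deadline] = k  # visible only from the deadline on
            else:
                visible = [d for d in published if d <= release]
                received.append(published[max(visible)] if visible else None)
    return received
```

For five cycles, every seed yields `[None, 0, 1, 2, 3]`: the first Receiver instance sees nothing, and each later one sees the value from the previous cycle.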

Back to the PID Loop

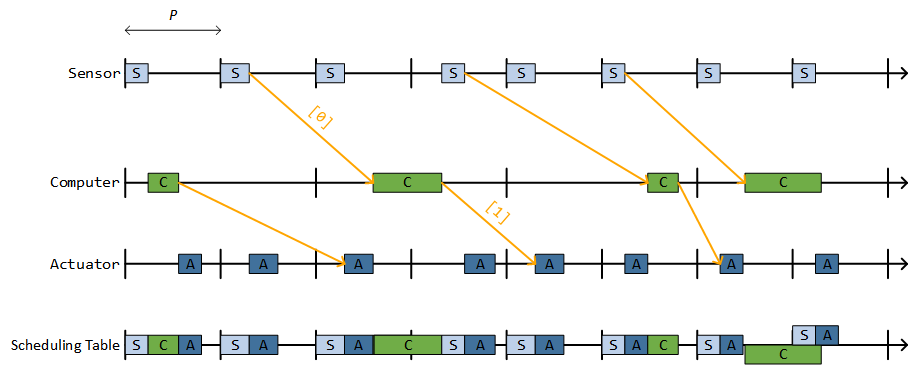

Applying the Visibility Principle to our original example gives the scheduling shown above, unrolled over 8 cycles of length P for the sake of the example, with varying scheduling policies and execution times.

You may verify that the system is completely deterministic, meaning that all the task instances will always read the same inputs and produce the same outputs. They remain insensitive to any interference such as execution time variations, and more generally to the scheduling policy: be it static, dynamic, single-core, multi-core, or pretty much anything you can imagine. Communication simply does not depend on anything other than the abstract temporal behavior that we originally expressed in the previous section, namely: Sensor and Actuator are P-periodic, and Computer is 2P-periodic – nothing more.
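The PID example can be simulated in the same spirit. In this sketch, we assume P = 1, each deadline at the end of the instance's period, and hypothetical value labels such as `C1<S1>`, meaning "output of Computer instance 1 computed from Sensor instance 1" (the labels are ours and need not match the numbering in the figure). Each Computer instance is randomly executed in either cycle of its 2P window, and the order of all instances inside a cycle is shuffled; the trace of every read comes out identical for all seeds.

```python
import random
from typing import Dict, List, Optional, Tuple

Channel = Dict[int, str]  # visibility date -> value published at that date


def latest(channel: Channel, release: int) -> Optional[str]:
    """Visibility Principle: newest value whose visibility date is <= release."""
    dates = [d for d in channel if d <= release]
    return channel[max(dates)] if dates else None


def simulate(seed: int, cycles: int = 8) -> List[Tuple[str, int, Optional[str]]]:
    """Run the PID loop with P = 1 and a random schedule; return every read."""
    rng = random.Random(seed)
    s_to_c: Channel = {}  # Sensor -> Computer
    c_to_a: Channel = {}  # Computer -> Actuator
    reads: List[Tuple[str, int, Optional[str]]] = []  # (task, release, value read)

    # Computer instance j owns the window [2j, 2j + 2]; run it in either cycle,
    # e.g. to mimic a dynamic scheduler or a long execution time.
    computer_cycle = {j: 2 * j + rng.randint(0, 1) for j in range(cycles // 2)}

    for k in range(cycles):  # cycle k spans [k, k + 1]
        jobs = [("S", k), ("A", k)]
        jobs += [("C", j) for j, c in computer_cycle.items() if c == k]
        rng.shuffle(jobs)  # arbitrary execution order inside the cycle
        for task, i in jobs:
            if task == "S":  # window [i, i + 1]: value visible at i + 1
                s_to_c[i + 1] = f"S{i}"
            elif task == "C":  # window [2i, 2i + 2]: value visible at 2i + 2
                x = latest(s_to_c, 2 * i)
                reads.append(("C", 2 * i, x))
                c_to_a[2 * i + 2] = f"C{i}<{x}>"
            else:  # Actuator, window [i, i + 1]
                reads.append(("A", i, latest(c_to_a, i)))
    return sorted(reads)  # execution order differs between seeds, so sort the trace
```

With this labeling, Computer's first instance reads nothing (no Sensor data is visible at date 0), and the Actuator instances released at 4 and 5 both read `C1<S1>`.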

Notice that the global end-to-end delay is now always 4P: the latency between the time when Sensor detects some change in the environment and the time when Actuator issues a corrective action that takes this change into account can be up to 4P. See e.g. the message exchange Sensor → [0] → Computer → [1] → Actuator.
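The 4P figure can be checked by following one message along the chain, assuming (as in the examples) implicit deadlines, i.e. each instance's window is [release, release + period]. The hypothetical helper below takes the Sensor instance whose deadline coincides with the release of Computer instance j (the data that Computer instance reads), and measures from that Sensor release to the deadline of the first Actuator instance that can see Computer's output.

```python
def reaction_latency(j: int, P: float = 1.0) -> float:
    """Latency along Sensor -> Computer instance j -> Actuator (hypothetical helper).

    Assumes implicit deadlines: every instance's window is [release, release + period],
    with Sensor and Actuator P-periodic and Computer 2P-periodic.
    """
    if j < 1:
        raise ValueError("Computer instance 0 has no Sensor data visible at its release")
    computer_release = 2 * j * P
    sensor_release = computer_release - P        # this Sensor's deadline == computer_release
    actuator_release = computer_release + 2 * P  # Computer's deadline: output visible here
    actuator_deadline = actuator_release + P     # corrective action issued by then
    return actuator_deadline - sensor_release
```

The result is 4P for every j ≥ 1, matching the 4P stated above: one period of Sensor, two of Computer and one of Actuator, since each instance's output only becomes visible at its deadline.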

About the Implementation in ASTERIOS®

The communication layer provided by the ASTERIOS® Real-Time Kernel (RTK) implements the Visibility Principle described above. Moreover, the code of the communication layer is entirely wait-free and re-entrant, meaning that it can be preempted, or executed simultaneously on multiple cores, transparently.

This implementation makes it possible to actually deduct the CPU time used for communication from the tasks’ budgets: in ASTERIOS®, unlike in synchronous models, communication does not occur in zero time. In other words, the communication layer is always executed on behalf of the communicating task, which “lends” its resources (CPU time and memory) for that purpose.

When the hardware target provides an MPU or an MMU, the communication layer of ASTERIOS® is complemented by automatic spatial isolation of the tasks, thus ensuring that tasks may only exchange data through the communication layer. This isolation, coupled with the Visibility Principle described above, makes it possible to formally prove communication determinism, as defined in the introduction. This formal proof, however, is beyond the scope of this post.

Conclusion

The deterministic communication paradigm presented in this post is natively implemented by ASTERIOS®, and enforces predictability and reproducibility for multi-task real-time applications. Complete reproducibility implies that, if the application implements e.g. a health monitoring task, and if this task triggers a warning during one run, then the exact same warning will be triggered, during the exact same cycle, in every run of the application. More generally, the data exchanged between the tasks will always remain the same from one run to another, independently of execution time variations, or even of the chosen scheduling policy. On top of this, the property holds on both single-core and multi-core platforms, with no additional migration cost.

This approach enables seamless system-scale integration, by drastically reducing the costs induced by common operations such as adding or removing tasks from the application, or changing the CPU budgets granted to tasks. Each task of the system can (and should!) be specified and implemented independently of the others, focusing only on the functional need that it addresses – not on the consequences it may or may not have on other tasks that it does not even directly interact with.

There is, however, one drawback: as we’ve seen with the I&C example, a side effect of the Visibility Principle is that it delays data exchanges, thus increasing end-to-end delays throughout the system. This can be an issue, especially for the stability of control loops. Luckily, one of the latest extensions of the ASTERIOS® programming model brings a solution to this problem, by enabling null-latency communication while still preserving the time-triggered approach and communication determinism. But that’s for another post…

And One Final Note…

We want to stress that the examples we’ve used in this post are ridiculously simple compared to what the ASTERIOS® programming model allows. There is absolutely no restriction on the temporal behavior of the communicating tasks: they don’t need to be synchronized, nor harmonic – actually, tasks don’t need to be periodic at all: their temporal behavior may even be data-dependent. Communication determinism is enforced in all cases. The expressiveness offered by the real-time programming model behind ASTERIOS® is one of its great strengths, and it will be the topic of yet another post – so stay tuned!

About the author

Emmanuel Ohayon has been a Software Architect at KRONO-SAFE since 2014; he contributed to the roots of ASTERIOS technology (compiler and generic part of the Real-Time Kernel) as head of the Core Team. Currently leads some dark, secret R&D projects that aim to make ASTERIOS rule the World of RTOSes, in null-latency. Loves to speak of himself in the third person. Before KRONO-SAFE, he was a Research Engineer at CEA (French Alternative Energies and Atomic Energy Commission).